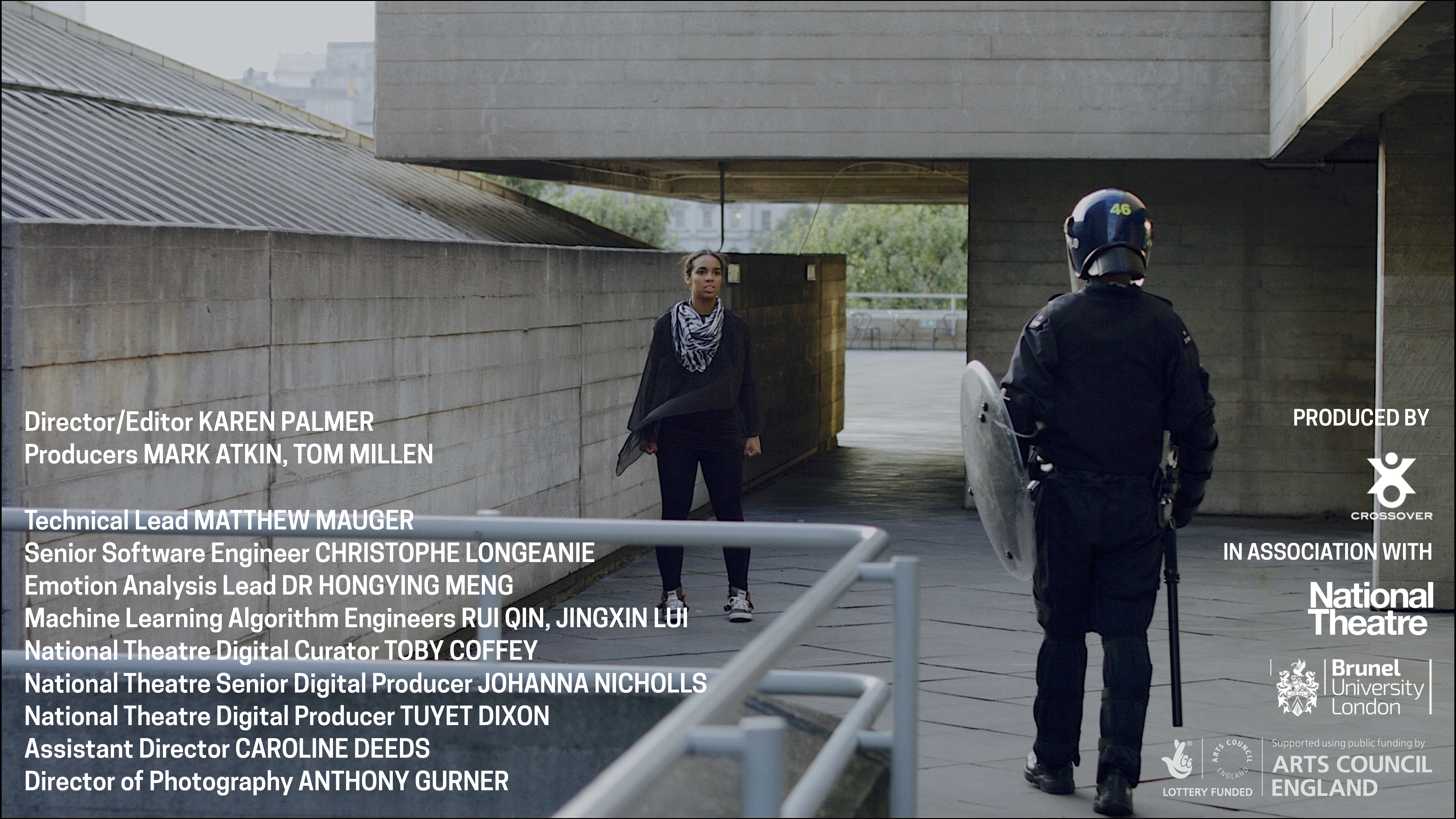

Riot (Prototype)

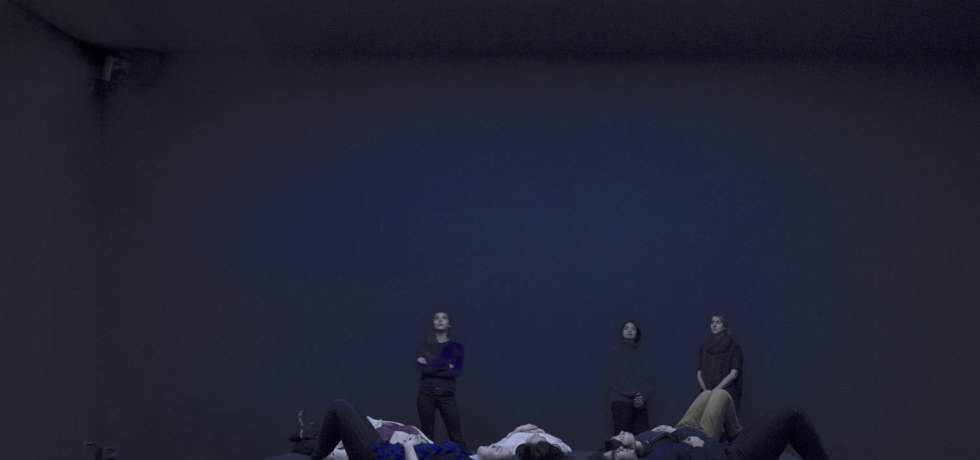

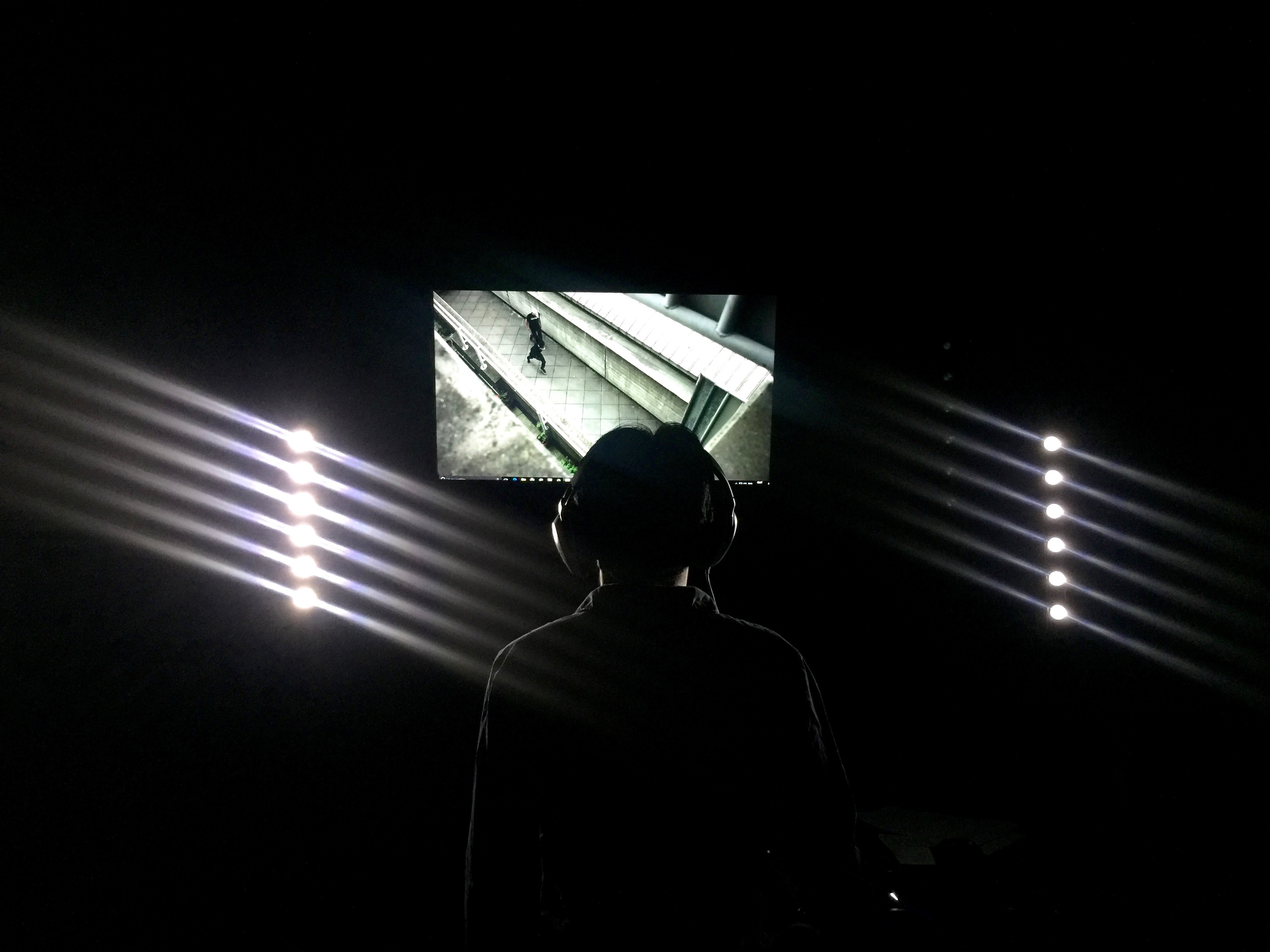

Viewing Riot (Prototype) at the Museo de Arte Contemporáneo in Lima.

Riot is an immersive video installation set during a protest march that swiftly escalates into a dangerous riot. It responds to each participant's emotional state in real time, altering their journey through the piece.

The objective is to get through a digitally simulated riot alive, which the user attempts by communicating with a variety of characters. The narrative, however, is governed by the user's emotional state, measured by bespoke facial expression recognition software and by devices that monitor neurological activity. If the user becomes agitated, characters become defensive or impatient, and the user is taken deeper into an unknown world.

Karen Palmer is a London-based film director and digital artist. Her work fuses emerging immersive storytelling technologies with diverse film genres, including documentary, music video, short film, and experimental film. Her neurogame Syncself2 received international exposure through exhibitions at the Victoria & Albert Museum and the Sheffield International Documentary Festival. In 2012, she was commissioned to create the interactive film Evolution as part of the Cultural Olympiad leading up to the London Olympics. She has spoken at events such as TEDx Australia, the Google Cultural Institute Paris, the Art and Machine Learning Global Summit, and the Games for Change Festival.

Palmer created Riot in response to the 2014 riots in Ferguson, Missouri, which followed the police shooting of 18-year-old Michael Brown. Riot was produced by Crossover in partnership with Brunel University and the National Theatre, London. It has been shown at the V&A, at the Future of Storytelling Summit in New York, and at the Phi Centre in Montreal. In 2017, Palmer was a TED Resident in New York and an A.I. Artist in Residence at ThoughtWorks.

“The same facial recognition technology that powers ‘Riot’ has been put to use by governments in Berlin and China to track individuals suspected of terrorism, and was enlisted in a recent controversial attempt to label people as gay or straight based on their facial features. As an independent artist, Palmer insists the key to equity and social justice [lies in] making this technology widely available to ordinary people.”